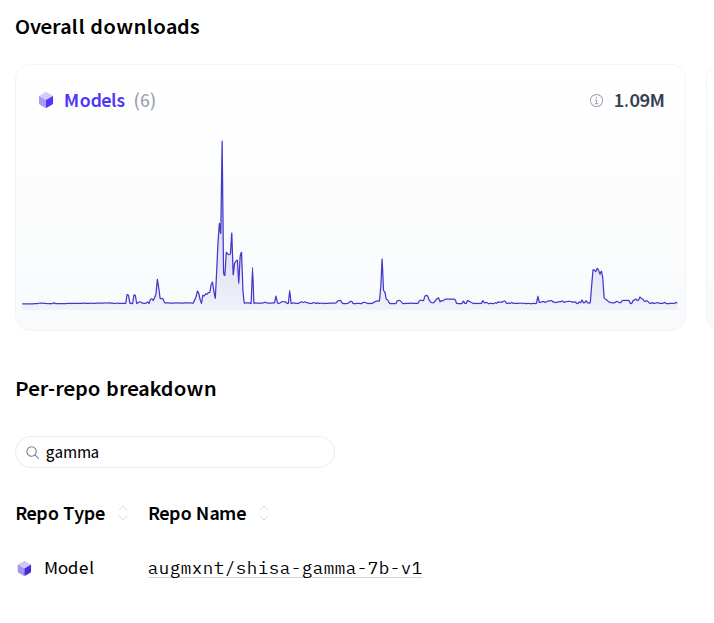

Shisa-Gamma-7b-v1 Surpasses 1 Million Downloads

One year after its role in pioneering evolutionary model merges, our model reaches a significant milestone.

It has been exactly one year since Sakana AI published their landmark research on Evolutionary Model Merges of LLMs, which utilized our shisa-gamma-7b-v1 as the Japanese backbone model. We were honored to see this work featured in Nature Machine Intelligence this past January.

The success of this research, combined with the model’s sustained performance on the Weights & Biases Nejumi LLM Leaderboard, has driven significant interest, resulting in over 1 million downloads to date.

As we look toward the future, we invite you to explore our latest advancement: the shisa-v2-llama3.1-8b-preview. This model represents our most capable release yet, featuring enhanced instruction following, superior creative writing, and a more efficient tokenization strategy.

We extend our gratitude to Mistral AI, Stability AI Japan, and the open-source AI community for their contributions, which have been instrumental to our progress.
